Microsoft has publicly released its Responsible AI framework, which is intended to guide the development of “better”, “more trustworthy” artificial intelligence.

The software giant said AI systems are the product of many different decisions made by those who develop and deploy them. From system purpose to how people interact with AI systems, firms “need to proactively guide these decisions toward more beneficial and equitable outcomes”, added the US tech company.

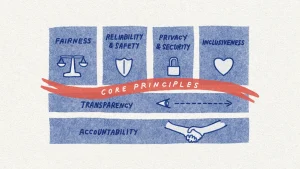

This entails keeping people and their goals at the centre of system design decisions and respecting enduring values like fairness, reliability and safety, privacy and security, inclusiveness, transparency, and accountability.

Microsoft outlined the goals at the core of its framework, which help break down a broad principle like ‘accountability’ into its key enablers, such as impact assessments, data governance, and human oversight.

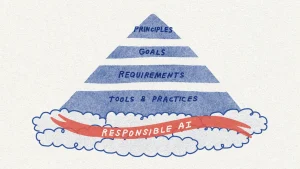

The core components of Microsoft’s Responsible AI Standard

Each goal is composed of a set of requirements, which are steps that teams “must” take to ensure that AI systems meet the goals throughout the system lifecycle. Finally, the Standard maps available tools and practices to specific requirements so that Microsoft’s teams implementing it have resources to help them succeed.

According to Microsoft, there is a need for this guidance. “AI is becoming more and more a part of our lives, and yet, our laws are lagging behind,” it stated.

This new framework builds on the first version of the standard that launched internally in the autumn of 2019, as well as the latest research and lessons learned from its own product experiences – an example being the fairness issue in speech-to-text technology.

In March 2020, an academic study revealed that speech-to-text technology across the tech sector produced error rates for members of some Black and African American communities that were nearly double those for white users. Microsoft realised that its pre-release testing had not accounted for the rich diversity of speech across people with different backgrounds and from different regions.

Microsoft learned that it needed to engage an expert sociolinguist to better understand this diversity and sought to expand its data collection efforts to narrow the performance gap in its speech-to-text technology.

Along the way, it also learned the value of bringing in experts early, including to better understand factors that might account for variations in system performance.

Additionally, Microsoft reviewed the concerns around its synthetic speech technology, Custom Neural Voice, and, through its Responsible AI program, adopted a layered control framework.

It restricted customer access to the service, ensured acceptable use cases were proactively defined and established technical guardrails to help ensure the active participation of the speaker when creating a synthetic voice.

Through these and other controls, Microsoft helped protect against misuse, while maintaining beneficial uses of the technology.

These real-world challenges informed the development of Microsoft’s Responsible AI Standard and demonstrate its impact on the way the company designs, develops, and deploys AI systems.

The tech company said it has also made available some key resources that support the Responsible AI Standard: its Impact Assessment template and guide, and a collection of Transparency Notes.

The Responsible AI Standard is grounded in Microsoft’s core principles

Impact Assessments have proven valuable at Microsoft in ensuring that teams explore the impact of their AI system – including its stakeholders, intended benefits, and potential harms – in depth at the earliest design stages.

Microsoft added that Transparency Notes are a new form of documentation in which it discloses to its customers the capabilities and limitations of its core building block technologies, so they have the knowledge necessary to make responsible deployment choices.

According to Microsoft, its updated Responsible AI Standard reflects hundreds of inputs across its technologies, professions, and geographies.

Microsoft described the Standard as a significant step forward for its practice of responsible AI because it is “much more” actionable and concrete: it sets out practical approaches for identifying, measuring, and mitigating harms ahead of time, and requires teams to adopt controls to secure beneficial uses and guard against misuse.