This year’s CogX Festival, held in the vast expanse of London’s O2 Arena, boasted a diverse range of speakers and subject matter, although the programme risked being overshadowed at one stage by climate change protestors who had Shell, one of the event’s main sponsors, in their sights.

Otherwise, the festival was a smorgasbord of intellectual diversity, with an eclectic mix of leaders and founders ranging from Queen Rania Al Abdullah of Jordan, who spoke on how to lead humanely in an era of rapid technological progress, to world-renowned beatboxer Reeps100, who gave an electrifying display of integrating AI into music production.

Mythical metaphors

Stephen Fry, comedian, broadcaster, actor and narrator of the Harry Potter books, delivered a thought-provoking, albeit alarming, insight into humanity’s potential future with AI.

While enterprises have been warned of the damage AI’s mimicry could do to their businesses, Fry revealed during his talk that he too has lost IP to digital deepfakery: an AI clone of his voice was used in a documentary without his participation or permission.

Fry told the CogX audience that his readings of the Harry Potter audiobooks had been fed into an AI and used to create new audio.

The Gosford Park actor warned that it wouldn’t be long before AI was used to create deepfake videos of actors without consent. “You ain’t seen nothing yet,” he recalled telling his agents at the time.

Fry added: “Technology is not a noun, it is a verb, it is always moving.”

“What we have now is not what will be. When it comes to AI models, what we have now will advance at a faster rate than any technology we have ever seen. One thing we can all agree on: it’s a f***ing weird time to be alive.”

While the British, Cambridge University-educated actor suggested that regulation was the way forward, he also painted a stark picture of how it might play out. The West might limit AI based on its values and morals, he warned, but countries like Russia and China may not impose such restrictions.

This, he said, could result in an AI “arms race,” where the West deliberately chooses less powerful “weapons”.

Stephen Fry speaking at CogX Festival

Alluding to Greek mythology, he added: “It’s a question of whether we see ourselves as Prometheus, gifting fire (sentience) to humans (AI), or as Zeus, fearful of them possessing it.”

Google’s vision for a responsible future

But do things really need to be quite so binary? Couldn’t Prometheus have partnered with Zeus?

Google DeepMind’s COO, Lila Ibrahim, for one, would perhaps favour a cautious version of Prometheus.

In a landscape where opinions on AI range from fear of its potential dominance to reckless optimism about its capabilities, Ibrahim’s measured voice for regulation came as a breath of fresh air.

The Google exec spoke about crafting “a responsible and revolutionary future”, with her talk covering topics ranging from sustainability and healthcare to responsible technology development within DeepMind and the role of regulators.

Her discussion was particularly timely, given UK Prime Minister Rishi Sunak’s recent announcement of his vision for the country to become a global hub for AI safety.

“AI is such a transformative technology that we have to regulate it,” Ibrahim emphasised, advocating for an international approach to AI regulation.

Ibrahim, a leading woman in the field, offered a conscientious approach to harnessing the power of AI that felt both inspiring and vital in today’s rapidly evolving tech landscape.

Mental wellness

Another highlight for the TI team, though not AI-focused, was a session on mental health in the workplace.

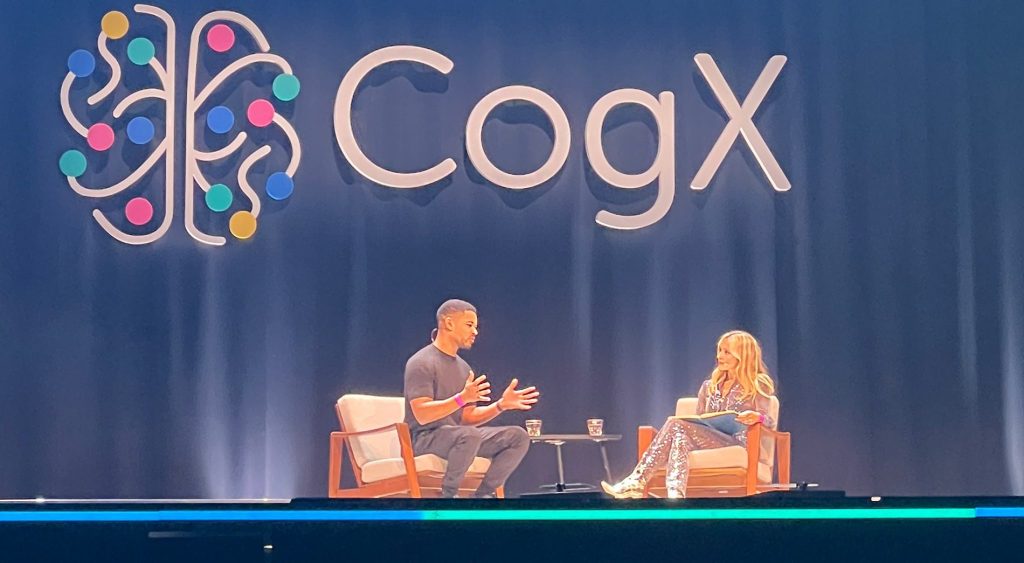

British Nigerian entrepreneur and Thirdweb founder Steven Bartlett and TV presenter and wellness influencer Fearne Cotton offered a refreshing perspective on the subject of health and wellbeing.

Bartlett emphasised the changing dynamics of the workplace, stressing the importance of community and connection.

“The thing that keeps staff retained, focused and, most importantly, engaged is being surrounded by a group of people they like,” he said.

To back this up, Bartlett cited studies linking stress to increased mortality rates, using them to reinforce his point that a supportive community can significantly improve staff retention, focus and engagement.

Steven Bartlett and Fearne Cotton talk about mental health and wellbeing at CogX Festival

One study, he recalled, suggested that after undergoing a significant life event, like a death in the family, you would be 13% more likely to die in the following year, unless you had a supportive community around you.

Cotton, meanwhile, shared her personal struggles with severe panic attacks, highlighting the importance of open dialogue and support in managing such issues.

Their conversation was a powerful testament to the need for businesses to prioritise the wellbeing of their teams. It served as a reminder that open, honest conversations about mental health should be the norm in every workplace, not the exception.

Our very own Emily Curryer sat down for a coffee with Graham Thomas, senior technologist at Lenovo – read about what they discussed here.